Deploying a Kubernetes 1.14.3 Cluster with kubeadm: The Dashboard UI (Part 2)


In the previous article we used kubeadm to quickly deploy Kubernetes v1.14.3. This article covers how to deploy the Kubernetes web UI (Dashboard) and some cluster add-on components.

Deploying the kubernetes-dashboard UI

Step 1: Download the UI YAML files

[root@linux-master ~]# mkdir ui && cd ui/

[root@linux-master ui]# for i in dashboard-configmap dashboard-controller dashboard-rbac dashboard-secret dashboard-service; do wget https://raw.githubusercontent.com/kubernetes/kubernetes/master/cluster/addons/dashboard/$i.yaml; done
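The download loop pulls from the master branch, whose contents can change over time. The following is a minimal sketch that builds the same five URLs from a configurable branch, so the manifests can be pinned to a release. The function name `dashboard_urls` and the `BRANCH` variable are illustrative and not part of the original steps; whether a given branch still contains these files is an assumption you should check before relying on it.

```shell
# Print the download URL of each dashboard addon manifest, one per line.
# BRANCH is a hypothetical knob (not in the original article); it defaults
# to "master", which is what the original loop uses.
dashboard_urls() {
  branch="${BRANCH:-master}"
  base="https://raw.githubusercontent.com/kubernetes/kubernetes/${branch}/cluster/addons/dashboard"
  for name in dashboard-configmap dashboard-controller dashboard-rbac \
              dashboard-secret dashboard-service; do
    printf '%s/%s.yaml\n' "$base" "$name"
  done
}

# Usage (commented out so that sourcing this snippet has no side effects):
# dashboard_urls | xargs -n 1 wget
```

Keeping URL construction separate from the download makes it easy to review the list before fetching anything.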

Step 2: Edit dashboard-service.yaml and change the Service type to NodePort

apiVersion: v1
kind: Service
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    k8s-app: kubernetes-dashboard
  # Add this field
  type: NodePort
  ports:
  - port: 443
    targetPort: 8443
    # Port exposed on every node
    nodePort: 31443

Step 3: Edit dashboard-controller.yaml

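Because k8s.gcr.io cannot be reached from mainland China, we need to change the image address to a domestic mirror. You can use the mirror provided by the author: registry.cn-hangzhou.aliyuncs.com/kubernetes-base/kubernetes-dashboard-amd64:v1.10.1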
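The image swap can also be scripted instead of edited by hand. This is a minimal sketch using sed; `set_dashboard_image` is a hypothetical helper name, and it assumes the manifest contains `image:` as a plain key on its own line (not in the `- image:` list form). It leaves a `.bak` copy of the original file next to it.

```shell
# Replace the value of every "image:" line in a manifest file.
# set_dashboard_image is a hypothetical helper; it assumes the only
# "image:" line in the file belongs to the dashboard container.
set_dashboard_image() {
  file="$1"
  image="$2"
  # Keep the original indentation and swap everything after "image:".
  sed -i.bak -E "s|^([[:space:]]*image:[[:space:]]*).*|\1${image}|" "$file"
}

# Usage (commented out so that sourcing this snippet changes nothing):
# set_dashboard_image dashboard-controller.yaml \
#   registry.cn-hangzhou.aliyuncs.com/kubernetes-base/kubernetes-dashboard-amd64:v1.10.1
```

Running `grep image: dashboard-controller.yaml` afterwards is a quick way to confirm the result before applying the manifests.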

Run kubectl apply -f to apply the manifests:

[root@linux-master ui]# kubectl apply -f .

configmap/kubernetes-dashboard-settings created

serviceaccount/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

role.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard-minimal created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-key-holder created

Step 4: Get the token

[root@linux-master ui]# kubectl -n kube-system get secret | grep kubernetes-dashboard-token

kubernetes-dashboard-token-cvvtq kubernetes.io/service-account-token 3 11m

[root@linux-master ui]# kubectl describe -n kube-system secret kubernetes-dashboard-token-cvvtq

Name: kubernetes-dashboard-token-cvvtq

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: kubernetes-dashboard

kubernetes.io/service-account.uid: a2a1a323-8da3-11e9-870b-000c290ee452

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1025 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1jdnZ0cSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImEyYTFhMzIzLThkYTMtMTFlOS04NzBiLTAwMGMyOTBlZTQ1MiIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlLXN5c3RlbTprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.GaDEovwqgjobh6Ovi5b1d8tskGoW_dWBHwUFl4mtjRpZVJ-FJipQDy3-oXjrChY41i4LYTjE2ZV3pQL64GlsyFzb5iycDEVa1RSi_CSdotKDNixCzYWH_Z9I-IgM5moMfJvdBZoIPN2psv4Go_rbSKpGXeZRZiNgprW3Oa_jWVv8ckDfIqSAZ1OaDsv4RHRXQWsff3Lp2mgXQukHofW_9aDMkaONc-IbAN4_r9ApKdkFRm9GJMnJ9rFo4limI_5JC0Z0qdZs_87ESimccn5G5CZv05_WFeCuWD5j10srvG9lQEy5YbmhvFgo0iB_gdzimdGdIWaVIkFf1Poc9Vd0gA
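A service account token is a JWT, so its middle segment can be decoded to confirm which account it belongs to. The sketch below is one way to do that in plain shell; `jwt_payload` is a hypothetical helper, not a kubectl feature, and it only decodes the payload without verifying the signature.

```shell
# Decode the payload (second dot-separated segment) of a JWT.
# jwt_payload is a hypothetical helper; it does not verify the signature.
jwt_payload() {
  # Convert the base64url alphabet back to the standard base64 alphabet.
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url drops the '=' padding; restore it to a multiple of four.
  while [ $(( ${#seg} % 4 )) -ne 0 ]; do
    seg="${seg}="
  done
  printf '%s' "$seg" | base64 -d
}

# Usage: jwt_payload "<token printed by kubectl describe secret>"
```

For the token above, the decoded JSON should name the kubernetes-dashboard service account in the kube-system namespace.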

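The default token does not have enough permissions, so the UI shows warnings after login. Here we create a ServiceAccount for the UI and bind it to the cluster-admin ClusterRole, which gives the account full permissions on the cluster.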

[root@linux-master ui]# vi dashboard-admin-ui.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: dashboard-admin-ui
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: dashboard-admin-ui
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: dashboard-admin-ui
  namespace: kube-system

Apply the YAML file:

[root@linux-master ui]# kubectl apply -f dashboard-admin-ui.yaml

serviceaccount/dashboard-admin-ui created

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin-ui created

[root@linux-master ui]# kubectl get secret -n kube-system |grep dash

dashboard-admin-ui-token-27c9c     kubernetes.io/service-account-token   3   53s
kubernetes-dashboard-certs         Opaque                                0   19m
kubernetes-dashboard-key-holder    Opaque                                2   19m
kubernetes-dashboard-token-cvvtq   kubernetes.io/service-account-token   3   19m

[root@linux-master ui]# kubectl describe secret -n kube-system dashboard-admin-ui-token-27c9c

Name: dashboard-admin-ui-token-27c9c

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin-ui

kubernetes.io/service-account.uid: 43126981-8da6-11e9-870b-000c290ee452

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdWktdG9rZW4tMjdjOWMiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluLXVpIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiNDMxMjY5ODEtOGRhNi0xMWU5LTg3MGItMDAwYzI5MGVlNDUyIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbi11aSJ9.TTTB6qTRz8AVJwsNjZVUlxZJkObqsJFd6QEzVJ9NiPwZefhhTz42PHGUAPxo3gMb__9g69JAtOEA4NUHh1eS4RGnmnhdSlw_7pKM0CxPdfT7kokpSuFizyOh08i65g5o9FbO-3tEiiBkv_fHzmxfM6jbuDAo2SWBgby7JHY-31fxZj73wLLjXGBN13JR0YEI9ownl75rZRZ_XMakbJvzpGXTM-egHW9I2D5zlvLQmsCGiB1uv3W8-xLeUShXolfuh18McGP5mEkiFpL0a2lMRbbP0ymkgohMtU6c50kgRLX1No3b9waSZ-IwuFWqLmyPDHO9Zw7e57kUoSP-uzPULA

ca.crt: 1025 bytes

namespace: 11 bytes
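Looking up the secret name and copying the token out of the describe output can be collapsed into one step. The following is a minimal sketch; `sa_token` is a hypothetical helper, and it assumes the cluster automatically creates a token secret for each service account, as the v1.14 cluster in this article does.

```shell
# Print the bearer token of a service account in kube-system.
# sa_token is a hypothetical helper. It assumes a token secret is listed
# under .secrets[0] of the service account object.
sa_token() {
  sa="$1"
  secret=$(kubectl -n kube-system get serviceaccount "$sa" \
    -o jsonpath='{.secrets[0].name}')
  # Secret data is base64-encoded in the API object, so decode it.
  kubectl -n kube-system get secret "$secret" \
    -o jsonpath='{.data.token}' | base64 -d
}

# Usage: sa_token dashboard-admin-ui
```

Unlike `kubectl describe`, reading `.data.token` returns the encoded value, which is why the decode step is needed.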

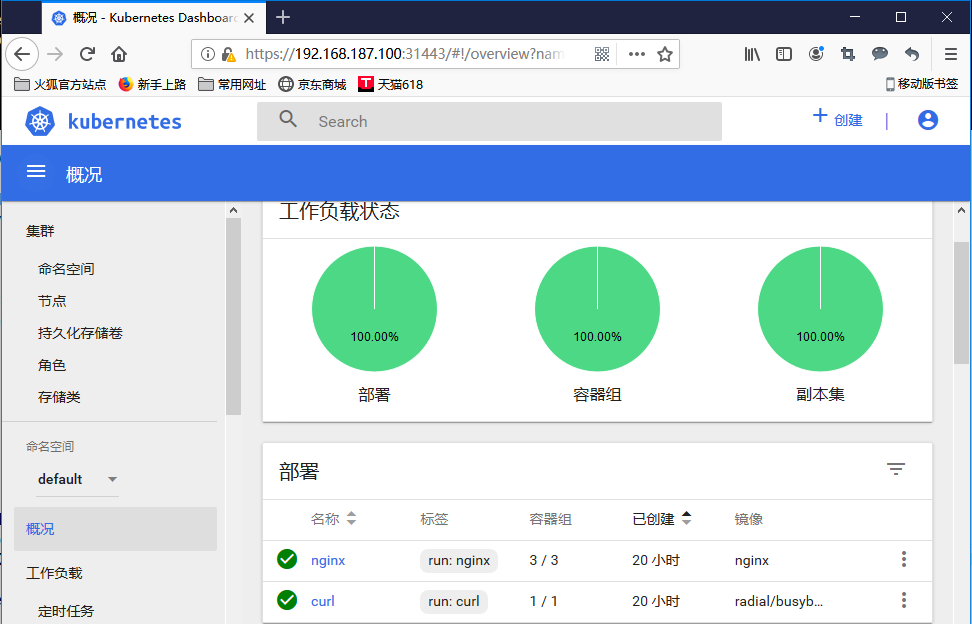

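Open https://<node IP>:31443 to log in to the UI. You may run into a problem here: because of the HTTPS certificate, the page can only be opened in Firefox. To use other browsers, generate your own certificate and replace the existing dashboard secret.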
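Generating a replacement certificate could look like the sketch below, which creates a self-signed pair with openssl. `make_dashboard_cert` is a hypothetical helper, and the file names dashboard.crt and dashboard.key as well as the CN are assumptions, not values from this article. Whether the dashboard picks the files up depends on its start-up flags: the manifest may need `--tls-cert-file` and `--tls-key-file` in place of `--auto-generate-certificates`, which you should verify against your dashboard-controller.yaml.

```shell
# Generate a self-signed certificate/key pair for the dashboard.
# make_dashboard_cert is a hypothetical helper; the file names and the
# default CN are assumptions, not taken from the article.
make_dashboard_cert() {
  dir="$1"
  cn="${2:-kubernetes-dashboard}"
  mkdir -p "$dir"
  openssl req -x509 -nodes -newkey rsa:2048 -days 365 \
    -subj "/CN=${cn}" \
    -keyout "$dir/dashboard.key" -out "$dir/dashboard.crt" 2>/dev/null
}

# Usage (commented out; these commands change the cluster):
# make_dashboard_cert ./certs 192.168.1.10   # hypothetical node IP
# kubectl -n kube-system delete secret kubernetes-dashboard-certs
# kubectl -n kube-system create secret generic kubernetes-dashboard-certs --from-file=./certs
# kubectl -n kube-system delete pod -l k8s-app=kubernetes-dashboard
```

A self-signed certificate still triggers a browser warning, but most browsers then offer the option to proceed.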

[root@linux-master ui]# kubectl get svc -n kube-system

NAME                   TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)                  AGE
calico-typha           ClusterIP   10.100.0.140   <none>        5473/TCP                 20h
kube-dns               ClusterIP   10.100.0.10    <none>        53/UDP,53/TCP,9153/TCP   22h
kubernetes-dashboard   NodePort    10.100.70.88   <none>        443:31443/TCP            32m

You can now log in to the UI with the token we just created.

Deploying Helm

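Microservices and containerization make deploying and managing complex applications much more challenging. Helm is an open-source package manager for Kubernetes applications and can be thought of as the apt-get / yum of Kubernetes. It was started by Deis, a company that has since been acquired by Microsoft.

By packaging software, Helm supports versioned releases and release control, which greatly reduces the complexity of deploying and managing Kubernetes applications.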

Step 1: Download the Helm binary package

[root@linux-master ~]# wget http://k8s.ziji.work/helm/v2.13.1/helm-v2.13.1-linux-amd64.tar.gz

[root@linux-master ~]# tar -zxvf helm-v2.13.1-linux-amd64.tar.gz

linux-amd64/

linux-amd64/LICENSE

linux-amd64/tiller

linux-amd64/helm

linux-amd64/README.md

[root@linux-master ~]# cd linux-amd64/

[root@linux-master linux-amd64]# cp helm /usr/local/bin/

Step 2: Create the RBAC permissions

[root@linux-master linux-amd64]# vim rbac-config.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: tiller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: tiller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: tiller
  namespace: kube-system

[root@linux-master linux-amd64]# kubectl create -f rbac-config.yaml

serviceaccount/tiller created

clusterrolebinding.rbac.authorization.k8s.io/tiller created
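Before installing Tiller, it can be worth confirming that the binding took effect. The sketch below uses `kubectl auth can-i` with impersonation; `sa_is_cluster_admin` is a hypothetical helper name, and the check assumes the user running it is allowed to impersonate service accounts (the kubeadm admin kubeconfig normally is).

```shell
# Check whether a service account may perform any verb on any resource.
# sa_is_cluster_admin is a hypothetical helper built on `kubectl auth can-i`;
# it returns 0 when the answer is "yes" and non-zero otherwise.
sa_is_cluster_admin() {
  ns="$1"
  sa="$2"
  kubectl auth can-i '*' '*' \
    --as="system:serviceaccount:${ns}:${sa}" >/dev/null
}

# Usage:
# sa_is_cluster_admin kube-system tiller && echo "tiller has full access"
```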

Step 3: Deploy Tiller with helm

[root@linux-master linux-amd64]# helm init --service-account tiller --tiller-image registry.cn-hangzhou.aliyuncs.com/kubernetes-base/helm-tiller:v2.13.1 --skip-refresh

Creating /root/.helm

Creating /root/.helm/repository

Creating /root/.helm/repository/cache

Creating /root/.helm/repository/local

Creating /root/.helm/plugins

Creating /root/.helm/starters

Creating /root/.helm/cache/archive

Creating /root/.helm/repository/repositories.yaml

Adding stable repo with URL: https://kubernetes-charts.storage.googleapis.com

Adding local repo with URL: http://127.0.0.1:8879/charts

$HELM_HOME has been configured at /root/.helm.

Tiller (the Helm server-side component) has been installed into your Kubernetes Cluster.

Please note: by default, Tiller is deployed with an insecure 'allow unauthenticated users' policy.

To prevent this, run `helm init` with the --tiller-tls-verify flag.

For more information on securing your installation see: https://docs.helm.sh/using_helm/#securing-your-helm-installation

Happy Helming!

After a short wait, we can see that Tiller is up and running:

[root@linux-master linux-amd64]# kubectl get pod -n kube-system -l app=helm

NAME READY STATUS RESTARTS AGE

tiller-deploy-5467bc979-9klf9 1/1 Running 0 49s
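Instead of polling the pod list by hand, the wait can be scripted. This is a minimal sketch; `wait_for_tiller` is a hypothetical helper, and the deployment name tiller-deploy is inferred from the pod name in the output above.

```shell
# Block until the Tiller deployment is rolled out, then print versions.
# wait_for_tiller is a hypothetical helper; the deployment name is inferred
# from the pod name tiller-deploy-... shown above.
wait_for_tiller() {
  timeout="${1:-120s}"
  kubectl -n kube-system rollout status deployment/tiller-deploy \
    --timeout="$timeout" || return 1
  # With Tiller reachable, `helm version` reports client and server versions.
  helm version
}

# Usage: wait_for_tiller 60s
```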

Deploying metrics-server with Helm

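Heapster has been marked as deprecated since Kubernetes 1.11, and users are advised to use metrics-server instead. Starting with Kubernetes 1.12, Heapster is removed from the various Kubernetes installation scripts.

We use Helm to deploy metrics-server here as well.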

Step 1: Create metrics-server.yaml

[root@linux-master ~]# vi metrics-server.yaml

args:
- --logtostderr
- --kubelet-insecure-tls
- --kubelet-preferred-address-types=InternalIP

Step 2: Switch the Helm repository and install metrics-server

helm repo remove stable

helm repo add stable https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts

helm repo update

helm search

helm install stable/metrics-server \
  -n metrics-server \
  --namespace kube-system \
  -f metrics-server.yaml
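Once the chart is installed, a quick check is whether the metrics API has been registered and reports as available. The sketch below is one way to test that; `metrics_api_ready` is a hypothetical helper, and it assumes metrics-server registers the APIService named v1beta1.metrics.k8s.io.

```shell
# Return 0 when the metrics API is registered and reports Available=True.
# metrics_api_ready is a hypothetical helper; the APIService name
# v1beta1.metrics.k8s.io is an assumption about what the chart registers.
metrics_api_ready() {
  status=$(kubectl get apiservice v1beta1.metrics.k8s.io \
    -o jsonpath='{.status.conditions[?(@.type=="Available")].status}')
  [ "$status" = "True" ]
}

# Usage:
# metrics_api_ready && kubectl top nodes
```

It can take a minute or two after installation before `kubectl top` returns data.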

Unfortunately, the current Kubernetes Dashboard does not yet support metrics-server. If you replace Heapster with metrics-server, the dashboard cannot show graphs of Pod memory and CPU usage.